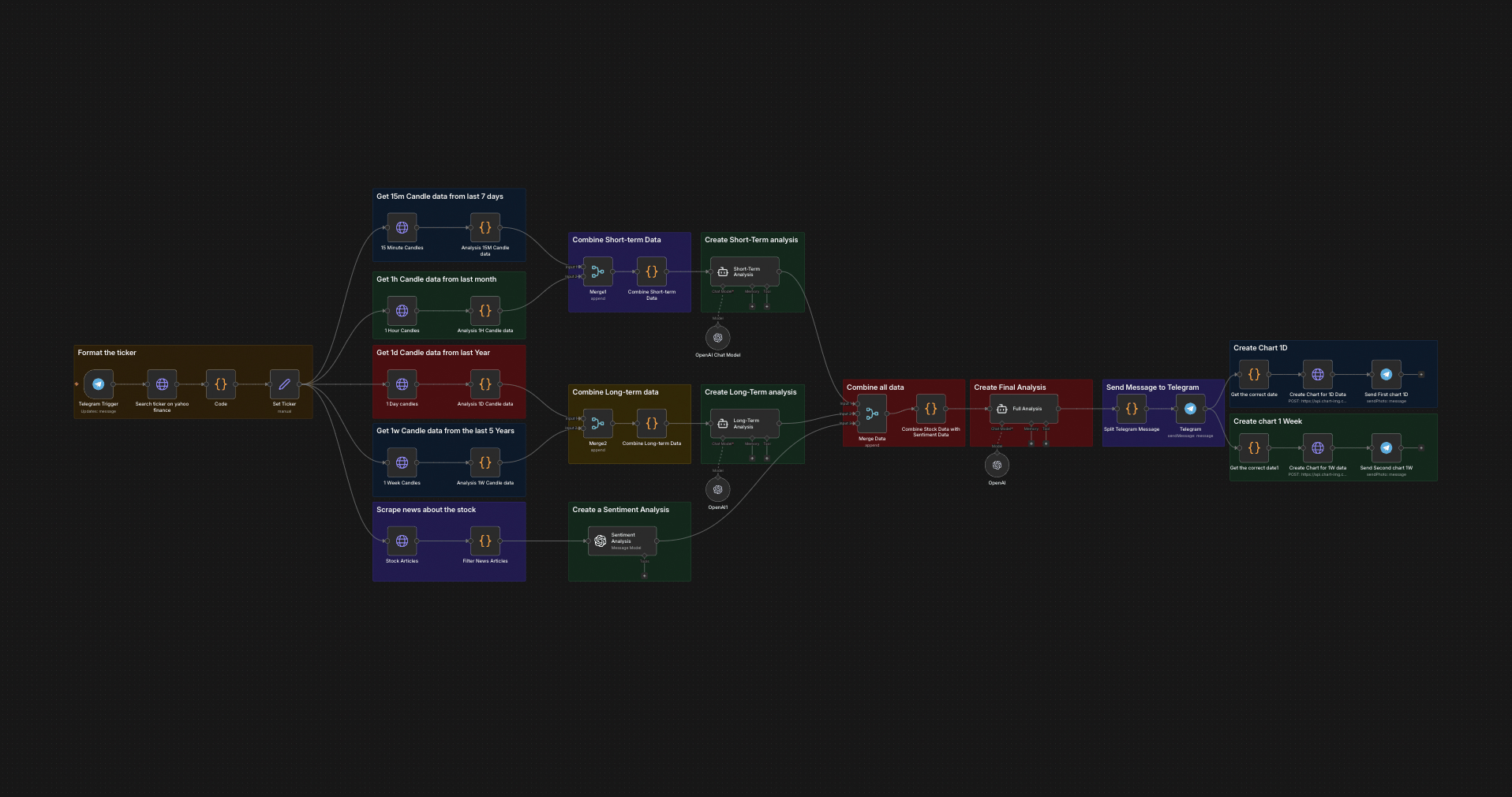

The full n8n canvas as it runs in production.

An Hour Per Ticker Is Not Scalable

Independent traders and small fund analysts spend most of their day on the same workflow — pull a chart, read recent news, check earnings, scan sentiment, write a one-paragraph thesis, decide. Per ticker, that's about an hour of work. For an active trader covering 8-10 names, that's the entire day before a trade is even placed.

Most of that hour is mechanical: pull data from Yahoo Finance, read CoinDesk or Bloomberg for headlines, check Finnhub for analyst ratings, synthesise a coherent view. The analytical judgement only kicks in once everything is collated.

Two failure modes break the manual sweep. First, missing context because one source wasn't checked. Second, anchoring on stale data because the trader pulled the chart 20 minutes ago and the market moved. Both lead to bad decisions.

This system pulls everything in parallel and synthesises it in under 30 seconds. The trader sends a ticker to Telegram, and the bot returns a structured analysis: multi-timeframe price action, headline sentiment, earnings calendar, recent news, and a one-paragraph thesis. The trader reads, decides, acts. Coverage scales from 8 names a day to 30+ on the same time budget.

From Ticker to Thesis in 30 Seconds

The system is built on n8n, with a Telegram bot as the front end. A ticker query triggers four parallel data legs: Yahoo Finance for multi-timeframe price action (1d/1w/1m candles), Finnhub for analyst ratings and the earnings calendar, Tavily for recent news search, and Reddit/X sentiment via configured search.

GPT-4o-mini reads all four data feeds and synthesises a structured response: current price, key levels, recent news summary, sentiment score, earnings flags, and a one-paragraph trade thesis. The output ships back to Telegram in under 30 seconds, and the trader can then request a deeper drilldown on any section.

From Ticker Query to Synthesised Analysis

Telegram Query

The trader sends a ticker or a plain-language request ('AAPL', '$TSLA', 'analyse Microsoft') to the Telegram bot. The bot parses the query to extract the ticker and any modifiers (timeframe, depth, specific question).
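A minimal sketch of the parsing step, assuming three input shapes (a `$` cashtag, a company name, or a bare upper-case symbol) and a hypothetical `NAME_TO_TICKER` alias table; a production build would more likely resolve company names through a symbol-search API. The depth keywords (`deep`, `detailed`, `full`) are also illustrative assumptions.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical alias table, for illustration only.
NAME_TO_TICKER = {"microsoft": "MSFT", "apple": "AAPL", "tesla": "TSLA"}
TIMEFRAMES = ("1d", "1w", "1m")
STOPWORDS = {"I", "A"}  # single capitals that are usually words, not tickers


@dataclass
class ParsedQuery:
    ticker: Optional[str]
    timeframes: List[str] = field(default_factory=list)
    deep: bool = False
    question: Optional[str] = None


def parse_query(text: str) -> ParsedQuery:
    raw = text.strip()
    lowered = raw.lower()

    # Modifiers: requested timeframes, depth, and a free-form question.
    timeframes = [tf for tf in TIMEFRAMES if re.search(rf"\b{tf}\b", lowered)]
    deep = re.search(r"\b(deep|detailed|full)\b", lowered) is not None
    question = raw if raw.endswith("?") else None

    ticker = None
    # 1. Cashtag form, e.g. "$TSLA".
    cashtag = re.search(r"\$([A-Za-z]{1,5})\b", raw)
    if cashtag:
        ticker = cashtag.group(1).upper()
    # 2. Known company name, e.g. "analyse Microsoft".
    if ticker is None:
        for name, symbol in NAME_TO_TICKER.items():
            if re.search(rf"\b{name}\b", lowered):
                ticker = symbol
                break
    # 3. Bare upper-case symbol, e.g. "AAPL".
    if ticker is None:
        for match in re.finditer(r"\b[A-Z]{1,5}\b", raw):
            if match.group(0) not in STOPWORDS:
                ticker = match.group(0)
                break

    return ParsedQuery(ticker, timeframes, deep, question)


print(parse_query("$tsla 1w deep"))
```

The order matters: an explicit cashtag wins over a name match, and the bare-symbol fallback runs last because it is the most likely to misfire on ordinary capitalised words.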

Parallel Data Pull

Four data legs fire concurrently: Yahoo Finance for price and candles, Finnhub for analyst data and earnings, Tavily for news, and a Reddit/X sentiment search. Total pull time is bounded by the slowest source (~8s).
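The timing claim can be illustrated with `asyncio.gather`, which runs the legs concurrently so wall-clock time tracks the slowest source rather than the sum. The four fetchers below are hypothetical stubs that sleep for an assumed latency; in the actual workflow the legs are n8n branches, so this shows the timing behaviour only, not the production code.

```python
import asyncio
import time
from typing import Dict, Optional


async def stub_leg(source: str, ticker: str, latency_s: float) -> dict:
    """Hypothetical stand-in for one data leg; sleeps for an assumed latency."""
    await asyncio.sleep(latency_s)
    return {"source": source, "ticker": ticker}


async def pull_all(ticker: str, timeout_s: float = 10.0) -> Dict[str, Optional[dict]]:
    legs = {
        "price": stub_leg("yahoo", ticker, 0.3),
        "analyst": stub_leg("finnhub", ticker, 0.2),
        "news": stub_leg("tavily", ticker, 0.4),
        "sentiment": stub_leg("social", ticker, 0.1),
    }
    # gather() runs the legs concurrently; return_exceptions=True means a failed
    # source comes back as an exception object instead of cancelling the others.
    # wait_for() caps the whole pull at timeout_s.
    results = await asyncio.wait_for(
        asyncio.gather(*legs.values(), return_exceptions=True), timeout=timeout_s
    )
    # Failed legs become None so the synthesis step can flag the missing source.
    return {
        key: None if isinstance(result, BaseException) else result
        for key, result in zip(legs, results)
    }


start = time.perf_counter()
data = asyncio.run(pull_all("AAPL"))
elapsed = time.perf_counter() - start
print(f"{len(data)} legs in {elapsed:.2f}s")  # close to the slowest leg (0.4s), not the 1.0s sum
```

Returning `None` for a failed leg, rather than failing the whole query, keeps a slow or broken source from blocking the other three.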

Sentiment Scoring

GPT-4o-mini scores recent headlines and social posts on a -10 to +10 scale. Scores are weighted by source credibility and recency, and the step outputs a single sentiment number plus the top 3 driving headlines.
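One way to combine per-item scores into the single weighted number, assuming GPT-4o-mini has already scored each headline or post on the -10 to +10 scale. The per-source credibility weights and the exponential recency decay (24-hour half-life) are illustrative choices, not the production weighting.

```python
from dataclasses import dataclass
from typing import List, Tuple

HALF_LIFE_HOURS = 24.0  # assumption: an item's weight halves every 24 hours


@dataclass
class ScoredItem:
    headline: str
    score: float        # model-assigned, -10 (bearish) to +10 (bullish)
    credibility: float  # hypothetical per-source weight, 0 to 1
    age_hours: float


def weight(item: ScoredItem) -> float:
    recency = 0.5 ** (item.age_hours / HALF_LIFE_HOURS)
    return item.credibility * recency


def aggregate(items: List[ScoredItem], top_n: int = 3) -> Tuple[float, List[str]]:
    total = sum(weight(i) for i in items)
    if total == 0:
        return 0.0, []
    # A weighted mean of values in [-10, 10] stays in [-10, 10].
    combined = sum(weight(i) * i.score for i in items) / total
    # "Driving" headlines: largest weighted contribution in either direction.
    drivers = sorted(items, key=lambda i: abs(weight(i) * i.score), reverse=True)
    return round(combined, 1), [i.headline for i in drivers[:top_n]]
```

Ranking drivers by absolute contribution means a strongly negative, credible headline can surface even when the overall number is positive, which is the kind of risk the thesis step should see.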

Multi-Timeframe Pattern Detection

Price action is analysed across the 1d/1w/1m timeframes. The step identifies key support and resistance levels, flags recent volume anomalies, and outputs a structured object.
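Support and resistance can be identified in several ways; the sketch below assumes the simplest, swing-high/swing-low pivots, plus a trailing z-score for volume anomalies. The `window`, `lookback` and `z` defaults and the candle dict shape (`high`, `low`, `volume`) are assumptions, and whether the production step runs as code or as a model prompt is not specified here.

```python
from statistics import mean, pstdev
from typing import Dict, List


def swing_levels(highs: List[float], lows: List[float], window: int = 2):
    """Naive pivots: a bar is a swing high (resistance) if its high is the maximum
    of the `window` bars on each side; swing lows (support) likewise."""
    supports, resistances = set(), set()
    for i in range(window, len(highs) - window):
        if highs[i] == max(highs[i - window : i + window + 1]):
            resistances.add(highs[i])
        if lows[i] == min(lows[i - window : i + window + 1]):
            supports.add(lows[i])
    return sorted(supports), sorted(resistances)


def volume_anomalies(volumes: List[float], lookback: int = 20, z: float = 2.0) -> List[int]:
    """Indices of bars whose volume is more than `z` standard deviations above
    the trailing `lookback`-bar mean."""
    flagged = []
    for i in range(lookback, len(volumes)):
        trailing = volumes[i - lookback : i]
        sd = pstdev(trailing)
        if sd > 0 and (volumes[i] - mean(trailing)) / sd > z:
            flagged.append(i)
    return flagged


def analyse(candles: Dict[str, List[dict]]) -> Dict[str, dict]:
    """Build the structured object: one entry per timeframe ("1d", "1w", "1m")."""
    out = {}
    for tf, bars in candles.items():
        supports, resistances = swing_levels(
            [b["high"] for b in bars], [b["low"] for b in bars]
        )
        out[tf] = {
            "support": supports,
            "resistance": resistances,
            "volume_anomalies": volume_anomalies([b["volume"] for b in bars]),
        }
    return out
```

Keeping the output as plain per-timeframe dicts makes it easy to pass straight into the thesis prompt as JSON.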

Thesis Synthesis

GPT-4o-mini reads all the structured data and writes a 4-6 sentence trade thesis covering the current setup, key risks and key opportunities, plus a confidence score. The prompt tells it to be honest, not bullish.
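Because the thesis goes straight to Telegram, it helps to check the model's output before sending it. A minimal sketch, assuming the prompt asks GPT-4o-mini for JSON with hypothetical `thesis` and `confidence` fields and a 0-100 confidence scale (the scale is an assumption). The BUY/SELL check mirrors the no-labels policy described in the FAQ.

```python
import json
import re

REQUIRED_FIELDS = {"thesis", "confidence"}  # hypothetical output schema


def count_sentences(text: str) -> int:
    # Naive split on ., ! or ? followed by whitespace; adequate for short prose.
    return len([s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s])


def validate_thesis(raw: str) -> dict:
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_FIELDS - data.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    n = count_sentences(data["thesis"])
    if not 4 <= n <= 6:
        raise ValueError(f"thesis has {n} sentences, expected 4-6")
    conf = data["confidence"]
    if isinstance(conf, bool) or not isinstance(conf, (int, float)) or not 0 <= conf <= 100:
        raise ValueError(f"confidence out of range: {conf!r}")
    # Keep the final call with the trader: reject explicit trade labels.
    if re.search(r"\b(BUY|SELL)\b", data["thesis"]):
        raise ValueError("thesis contains a BUY/SELL label")
    return data
```

On a `ValueError` the workflow could re-prompt the model once with the error message before falling back to a plain "analysis unavailable" reply.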

Telegram Delivery

Structured response ships back to Telegram with markdown formatting, key levels in a table, and clickable links to the source data. Drilldown queries ('show me the news' / 'why is sentiment negative') trigger follow-up workflows.
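A sketch of the reply builder, assuming the bot sends through the Telegram Bot API's `sendMessage` with `parse_mode` set to `MarkdownV2`. That mode requires escaping reserved characters in plain text, inside pre blocks and inside link URLs, and it has no table syntax, so the key levels go into a monospaced block. `format_reply` and its arguments are hypothetical, and the API call itself is omitted.

```python
from typing import List, Tuple

SPECIAL = set("_*[]()~`>#+-=|{}.!\\")


def esc(text: str) -> str:
    """Escape plain text for Telegram MarkdownV2."""
    return "".join("\\" + ch if ch in SPECIAL else ch for ch in text)


def esc_pre(text: str) -> str:
    """Inside pre/code blocks only ` and \\ need escaping."""
    return "".join("\\" + ch if ch in "`\\" else ch for ch in text)


def esc_url(url: str) -> str:
    """Inside the (...) part of an inline link only ) and \\ need escaping."""
    return "".join("\\" + ch if ch in ")\\" else ch for ch in url)


def format_reply(
    ticker: str,
    price: float,
    levels: List[Tuple[str, float]],
    thesis: str,
    sources: List[Tuple[str, str]],
) -> str:
    parts = [f"*{esc(ticker)}*  {esc(f'{price:.2f}')}", ""]
    # No table markup in Telegram, so align columns inside a monospaced pre block.
    rows = [f"{'Level':<12}{'Price':>10}"]
    rows += [f"{name:<12}{value:>10.2f}" for name, value in levels]
    parts += ["```", esc_pre("\n".join(rows)), "```", ""]
    parts += [esc(thesis), ""]
    parts += [f"[{esc(label)}]({esc_url(url)})" for label, url in sources]
    return "\n".join(parts)
```

Escaping is the usual source of failed sends in this mode: an unescaped `.` in a price or a `-` in a ticker such as `BRK-B` makes the API reject the whole message.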

What This Bot Does That Manual Synthesis Can't

30-Second Turnaround

Full multi-source analysis in under 30 seconds per ticker, compared with 60+ minutes by hand. Coverage scales 4-5×.

Multi-Source by Default

Price action, news, sentiment, earnings — all in one query. No tab-switching, no source juggling, no missed feeds.

Honest Thesis Generation

The model is prompted to be honest and risk-aware. Not bullish. Not fearmongering. Outputs a balanced view with confidence calibration.

Drilldown Queries

Follow-up questions ('show me the news', 'compare to MSFT', 'what are options doing') trigger deeper dives without re-running the full pipeline.
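Answering follow-ups without re-running the pipeline implies keeping the last analysis around. A minimal sketch, assuming an in-memory cache keyed by Telegram chat id, a 15-minute freshness window and simple keyword routing. The intent table, TTL and route names are hypothetical, and an n8n build would more likely persist this state outside the process.

```python
import re
import time
from typing import Callable, Dict, Optional, Tuple

# Hypothetical intent table: keyword -> section of the cached analysis.
SECTION_KEYWORDS = {
    "news": ("news", "headline"),
    "sentiment": ("sentiment",),
    "earnings": ("earnings",),
    "levels": ("support", "resistance", "level"),
}
COMPARE = re.compile(r"compare (?:to|with) \$?([A-Za-z]{1,5})\b", re.IGNORECASE)


class AnalysisCache:
    """Last analysis per chat, reused until it is older than ttl_s seconds."""

    def __init__(self, ttl_s: float = 15 * 60, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_s
        self._clock = clock
        self._store: Dict[int, Tuple[float, dict]] = {}

    def put(self, chat_id: int, analysis: dict) -> None:
        self._store[chat_id] = (self._clock(), analysis)

    def get(self, chat_id: int) -> Optional[dict]:
        entry = self._store.get(chat_id)
        if entry is None or self._clock() - entry[0] > self._ttl:
            self._store.pop(chat_id, None)
            return None
        return entry[1]


def route_followup(text: str, chat_id: int, cache: AnalysisCache) -> Tuple[str, object]:
    analysis = cache.get(chat_id)
    if analysis is None:
        return ("rerun", None)  # nothing fresh to drill into
    compare = COMPARE.search(text)
    if compare:
        return ("compare", compare.group(1).upper())  # pull only the new ticker
    lowered = text.lower()
    for section, keywords in SECTION_KEYWORDS.items():
        if any(k in lowered for k in keywords):
            return ("section", analysis.get(section))
    # Anything else (e.g. "what are options doing"): hand the cached analysis
    # and the question to the model or a dedicated follow-up workflow.
    return ("ask_model", analysis)
```

Injecting the clock keeps the TTL logic testable without real waiting.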

500+ Ticker Coverage

Works on US equities, major ADRs, crypto, and most ETFs. Configurable to cover specific markets or asset classes.

Configurable Risk Lens

The thesis prompt can be tuned for different trading styles: momentum, value, swing, day. The same data feeds drive different outputs depending on the rubric.
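A sketch of how the same pipeline can swap rubrics: a shared base prompt (honest, risk-aware, no BUY/SELL labels) plus one lens per trading style. The rubric wording is hypothetical; per the build timeline, the real lens is tuned against the trader's own framing.

```python
# Hypothetical rubric text; the real wording comes from the trader.
RISK_LENSES = {
    "momentum": "Weight recent trend, volume and news catalysts above valuation.",
    "value": "Weight valuation, earnings quality and analyst revisions; treat short-term moves as noise.",
    "swing": "Focus on 1w/1m structure, distance to support and resistance, and upcoming earnings dates.",
    "day": "Focus on 1d levels, volume anomalies and headlines from the last few hours.",
}

BASE_PROMPT = (
    "Using only the supplied data, write a 4-6 sentence trade thesis covering the "
    "current setup, key risks and key opportunities, plus a confidence score. "
    "Be honest and risk-aware: neither bullish nor fearmongering. "
    "Do not use BUY or SELL labels."
)


def build_system_prompt(style: str) -> str:
    """Combine the shared instructions with one trading-style lens."""
    if style not in RISK_LENSES:
        raise ValueError(f"unknown style {style!r}; expected one of {sorted(RISK_LENSES)}")
    return f"{BASE_PROMPT}\n\nRisk lens ({style}): {RISK_LENSES[style]}"
```

Keeping the shared guardrails in one base string means a new style only adds a lens; it cannot accidentally drop the honesty or no-labels instructions.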

Before vs. After: What Changes When Synthesis Runs in 30 Seconds

Before: The trader covers 8 names per day. Each takes 50-60 minutes to research properly. By 14:00, only 5 are done. The other 3 trade on stale or shallow analysis. Two of those positions go wrong because the trader missed an earnings warning that came out at 10:30.

After: The trader covers 30+ names per day. Each takes 30 seconds to get a structured analysis. The trader spends the saved time on judgement and risk sizing, not data collation. The pipeline of trade ideas triples, and coverage goes from one sector to four.

Live in 3 Weeks

Days 1-3 — Data Source Selection

Confirm which data sources the trader trusts (Yahoo Finance, Finnhub, Bloomberg if accessible, custom feeds). Wire API credentials. Verify rate limits and cost.

Days 4-10 — Parallel Pull and Sentiment

Build the four parallel data legs. Wire Tavily and Reddit sentiment search. Tune the GPT-4o-mini sentiment scoring prompt against historical examples the trader scores manually.

Days 11-15 — Thesis and Multi-Timeframe

Build the multi-timeframe pattern detection. Wire the thesis synthesis prompt to the trader's risk lens. Iterate against test queries to match the trader's framing.

Days 16-21 — Calibration and Drilldown

A pilot runs in parallel with manual research. The trader sends queries and compares the bot output to their own analysis, and we tune the prompt where they diverge. Drilldown queries wire in by week three.

The Right Fit — and When It Isn't

Right fit for independent traders, small fund analysts, and research firms covering equities or crypto with ~10-50 active names. Works best when the trader has clear views on what they want analysed (the rubric).

Not a fit for high-frequency trading where 30-second latency is too slow. Not a fit if the trader's edge is in proprietary data the model can't access — the bot is for synthesising public signals, not generating alpha from private feeds.

Frequently Asked Questions

Does this give buy/sell recommendations?

It outputs a structured thesis with confidence score and key risks. The trader makes the final call. We deliberately avoid 'BUY' / 'SELL' labels to keep judgement with the human.

How accurate is the sentiment scoring?

We benchmark against trader-scored examples during onboarding. Production accuracy sits at 85-92% agreement with the trader's own scoring. We retune quarterly as language patterns shift.
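"Agreement" can be measured more than one way, so the sketch below shows two plausible readings, both assumptions: the model's score falls within a tolerance of the trader's (here ±2 points on the -10 to +10 scale), or the two agree on direction with a small neutral band.

```python
from typing import Sequence


def _check(model: Sequence[float], trader: Sequence[float]) -> None:
    if len(model) != len(trader) or not model:
        raise ValueError("need two non-empty score lists of equal length")


def tolerance_agreement(model: Sequence[float], trader: Sequence[float], tolerance: float = 2.0) -> float:
    """Share of examples where the model is within `tolerance` points of the trader."""
    _check(model, trader)
    return sum(abs(m - t) <= tolerance for m, t in zip(model, trader)) / len(model)


def direction(score: float, neutral_band: float = 1.0) -> int:
    """-1 bearish, 0 neutral, +1 bullish."""
    if abs(score) <= neutral_band:
        return 0
    return 1 if score > 0 else -1


def direction_agreement(model: Sequence[float], trader: Sequence[float], neutral_band: float = 1.0) -> float:
    """Share of examples where model and trader agree on bearish/neutral/bullish."""
    _check(model, trader)
    hits = sum(
        direction(m, neutral_band) == direction(t, neutral_band)
        for m, t in zip(model, trader)
    )
    return hits / len(model)
```

The choice of tolerance or neutral band moves the headline percentage noticeably, so it is worth fixing the definition with the trader before onboarding starts.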

Can it cover futures, options, or fixed income?

Equities and crypto by default. Futures and options work with additional data feeds. Fixed income is harder because clean data is scarce — possible but more expensive to build.

What's the cost per query?

About $0.03-$0.08 per query depending on depth. Budget around $50-$150/mo for an active trader covering 30 names a day. Far cheaper than a Bloomberg terminal.
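The per-query figures translate into a monthly number as follows, assuming 30 full queries a day over roughly 21 trading days. Per-query spend alone comes to about $19-$50 a month, at or below the low end of the quoted budget; the remainder is not itemised, so drilldowns, repeat checks on the same name and data-source subscriptions are only plausible candidates.

```python
def monthly_cost(queries_per_day: float, cost_per_query: float, trading_days: int = 21) -> float:
    """Per-query spend for one month; ignores any fixed data-source fees."""
    return queries_per_day * cost_per_query * trading_days


low = monthly_cost(30, 0.03)
high = monthly_cost(30, 0.08)
print(f"${low:.2f} to ${high:.2f} per month")  # $18.90 to $50.40 per month
```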

Stop spending an hour per ticker on manual chart-and-news sweeps.

Book a Pipeline Audit. We'll scope the data sources you trust, define your analysis rubric, and quote a fixed-price build for your trading workflow.